Artificial intelligence was once the domain of a small group of scientists working in the world’s top laboratories. Now, many AI tools are available to anyone with an internet connection. Tools like ChatGPT, Voice.ai, DALL-E, and others have brought AI into daily life, but the terms used to describe their capabilities and inner workings are often anything but mainstream.

Here are 10 common terms you’ll likely hear in the same sentence as your favorite AI tool, on the nightly news, or by the water cooler. Keep this AI dictionary handy to stay informed about this popular (and sometimes controversial) topic.

AI-Generated Content

AI-generated content is any piece of written, audio, or visual media that was created partially or completely by an artificial intelligence-powered tool.

If someone uses AI to create something, it doesn’t automatically mean they cheated or irresponsibly cut corners. AI is often a great place to start when creating outlines, compiling thought-starters, or seeking a new way of looking at a problem.

AI Hallucination

When a question stumps an AI, it doesn’t always admit that it doesn’t know the answer. Instead, it may produce a made-up answer that sounds plausible and confident. This made-up answer is known as an AI hallucination.

One real-world case of a costly AI hallucination occurred in New York, where a lawyer used ChatGPT to help write a legal brief. The brief seemed complete and cited its sources, but several of the court cases it cited turned out not to exist. They were figments of the AI’s “imagination.”

Black Box

To understand the term black box, imagine the AI as a system of cogs, pulleys, and conveyor belts housed within a box. If the box is see-through, you can watch how the input is transformed into the final product. If the box is opaque, you only see what goes in and what comes out. An AI is called a black box when you can’t see how it arrived at its conclusions, either because its reasoning process is hidden from view or because it is too complex to follow. A black box can be a problem if you’d like to double-check the AI’s work.

Deepfake

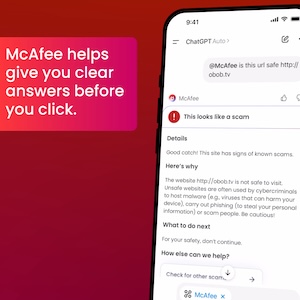

A deepfake is a photo, video, or audio clip that has been manipulated or generated with AI to portray events that never happened. Deepfakes are often used for humorous social media skits and viral posts, but unsavory characters also use them to spread fake news reports or scam people.

For example, people are placing politicians in unflattering poses and settings. Sometimes the deepfake is intended to get a laugh, but other times the deepfake creator intends to spark rumors that could sow discord or tarnish the reputation of the photo subject. One tip to spot a deepfake image is to look at the hands and faces of people in the background. Deepfakes often add or subtract fingers or distort facial expressions.

AI-assisted audio impersonations – which are considered deepfakes – are also rising in believability. According to McAfee’s “Beware the Artificial Imposter” report, 25% of respondents globally said that a voice scam happened either to themselves or to someone they know. Seventy-seven percent of people who were targeted by a voice scam lost money as a result.

Deep Learning

Deep learning is a type of machine learning that is loosely inspired by the way the human brain works. It passes information through many layers of artificial “neurons,” which lets the machine identify complex patterns in large amounts of data and make predictions based on them.

Explainable AI

Explainable AI, sometimes called white box AI, is the opposite of black box AI. An explainable AI model shows its work and how it arrived at its conclusion. Explainable AI can boost your confidence in the final output because you can double-check what went into the answer.
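
For readers who like to see an idea in code, here is a minimal Python sketch of what “showing its work” might look like. It is only an illustration: the feature names, weights, and threshold are all made up for this example and do not come from any real spam filter or AI product.

```python
# Hypothetical weights for a toy "is this email spam?" score.
# Every name and number here is invented for illustration.
WEIGHTS = {"contains_link": 2, "all_caps_subject": 1, "known_sender": -3}
THRESHOLD = 2

def explain_decision(features):
    """Return the decision along with each feature's contribution to it."""
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    score = sum(contributions.values())
    return {
        "is_spam": score >= THRESHOLD,
        "score": score,
        "contributions": contributions,
    }

email = {"contains_link": 1, "all_caps_subject": 1, "known_sender": 0}
result = explain_decision(email)

print("Spam?", result["is_spam"])
for name, points in result["contributions"].items():
    print(f"  {name}: {points:+d}")
```

Because the output lists how many points each feature added or subtracted, a person can check the reasoning behind the verdict. A black box would return only the verdict.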

Generative AI

Generative AI is the type of artificial intelligence that powers many of today’s mainstream AI tools, like ChatGPT, Bard, and Craiyon. Like a sponge, generative AI soaks up huge amounts of data during training. It then uses the patterns it learned from that data to create new text, images, or audio in response to each prompt.

Machine Learning

Machine learning is integral to AI because it lets the AI learn and improve. Instead of following explicit, step-by-step instructions for every task, a machine learning system finds patterns in examples, and it generally gets better as it is trained on more data.
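
As a rough illustration of learning from examples, the Python sketch below is never told the rule behind its data. It estimates the rule from a handful of made-up data points using a standard technique called least squares, then uses that estimate to make a prediction. The function names and the data are assumptions chosen for this example, and real machine learning systems are far larger and more complex.

```python
# Toy illustration: the program is never told the rule "y = 2x + 1".
# It estimates the rule from examples using ordinary least squares.

def fit_line(xs, ys):
    """Return (slope, intercept) of the best-fit line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

def predict(model, x):
    """Apply the learned line to a new input."""
    slope, intercept = model
    return slope * x + intercept

# Made-up training examples that happen to follow y = 2x + 1.
xs = [1, 2, 3, 4, 5]
ys = [3, 5, 7, 9, 11]

model = fit_line(xs, ys)
prediction = predict(model, 10)
print(prediction)  # prints 21.0
```

The point of the sketch is that nobody typed the rule into the program. It worked the rule out from the examples, which is the basic idea behind machine learning.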

Responsible AI

Not only must people use AI responsibly, but the people designing and programming AI must build it responsibly, too. Technologists must work to ensure that the data the AI depends on is accurate and as free from bias as possible. This diligence is necessary to help make sure that the AI’s output is correct and without prejudice.

Sentient

Sentient is an adjective that means someone or something is aware of feelings, sensations, and emotions. In futuristic movies depicting AI, the characters’ world goes off the rails when the robots become sentient, or when they “feel” human-like emotions. While it makes for great Hollywood drama, today’s AI is not sentient. It doesn’t empathize or understand the true meanings of happiness, excitement, sadness, or fear.

So, even if an AI composes a short story so beautiful that it makes you cry, the AI doesn’t know that what it created is touching. It is just fulfilling a prompt and using patterns to determine which word to choose next.
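
To see what choosing the next word from a pattern can mean, here is a deliberately tiny Python sketch. It counts which word most often follows another in a short, made-up sentence and then “predicts” the next word from those counts. The function names and the sample text are assumptions for this example. Tools like ChatGPT rely on vastly larger models, so treat this as an illustration of the general idea and not a description of how any particular product works.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for each word, which words follow it in the text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def next_word(following, word):
    """Return the word seen most often after `word`, or None if unseen."""
    counts = following.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Made-up sample text for the illustration.
corpus = "the cat sat on the mat the cat ate the fish the cat slept"
model = train_bigrams(corpus)

print(next_word(model, "the"))  # prints cat
```

The program picks “cat” only because that word followed “the” most often in its sample text. It has no idea what a cat is, and it certainly doesn’t feel anything about one.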