Artificial intelligence (AI) is making its way from high-tech labs and Hollywood plots into the hands of the general population. ChatGPT, the text generation tool, hardly needs an introduction, and AI art generators (like Midjourney and DALL-E) are hot on its heels in popularity. Inputting nonsensical prompts and receiving ridiculous images in return is a fun way to spend an afternoon.

However, while you’re using AI art generators for a laugh, cybercriminals are using the technology to trick people into believing sensationalist fake news, catfish dating profiles, and damaging impersonations. Sophisticated AI-generated art can be difficult to spot, but here are a few signs that you may be viewing a dubious image or engaging with a criminal behind an AI-generated profile.

What Are AI Art Generators and Deepfakes?

To better understand the cyberthreats posed by each, here are some quick definitions:

- AI art generators. Generative AI is typically the type of AI behind art generators. This type of AI is trained on billions of examples of art. When someone gives it a prompt, the AI draws on the patterns it learned from that vast library to produce an image it predicts will best fulfill the prompt. AI art is a hot topic of debate in the art world because none of the works it creates are technically original. It derives its final product from the work of various artists, the majority of whom haven’t granted the computer program permission to use their creations.

- Deepfake. A deepfake is a manipulation of existing photos and videos of real people. The resulting manipulation either makes an entirely new person out of a compilation of real people or makes the original subject look like they’re doing something they never did.

AI art and deepfakes aren’t technologies found only on the dark web. Anyone can download an AI art or deepfake app, such as FaceStealer and Fleeceware. Because the technology isn’t illegal and has many innocent uses, it’s difficult to regulate.

How Do People Use AI Art Maliciously?

It’s perfectly innocent to use AI art to create a cover photo for your social media profile or to pair it with a blog post. However, it’s best to be transparent with your audience and include a disclaimer or caption saying that it’s not original artwork. AI art turns malicious when people use images to intentionally trick others and gain financially from the trickery.

Catfish may use deepfake profile pictures and videos to convince their targets that they’re genuinely looking for love. Revealing their real face and identity could put a criminal catfish at risk of discovery, so they either use someone else’s pictures or deepfake an entire library of pictures.

Fake news propagators may also enlist the help of AI art or a deepfake to add “credibility” to their conspiracy theories. When they pair their sensationalist headlines with a photo that, at a quick glance, seems to prove their legitimacy, people may be more likely to share and spread the story. Fake news is damaging to society because of the extreme negative emotions it can generate in huge crowds. The resulting hysteria or outrage can lead to violence in some cases.

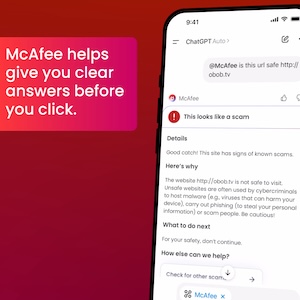

Finally, some criminals may use deepfakes to trick face ID and gain entry to sensitive online accounts. Deepfakes can also make it appear as if someone you trust said something different from what they actually said. Try out McAfee Deepfake Detector as your defense against malicious and misleading deepfakes.

To prevent someone from deepfaking their way into your accounts, protect your accounts with multifactor authentication. That means that more than one method of identification is necessary to open the account. These methods can be one-time codes sent to your cellphone, passwords, answers to security questions, or fingerprint ID in addition to face ID.
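For readers curious about how the one-time codes mentioned above can work, here is a minimal Python sketch. It assumes the code is a time-based one-time password (TOTP, as described in RFC 6238), which is one common scheme and not necessarily the one any particular service uses. The function names `one_time_code` and `login_allowed` are hypothetical and exist only for this illustration.

```python
import hashlib
import hmac
import struct


def one_time_code(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Derive a time-based one-time code (TOTP, RFC 6238) from a shared secret."""
    # Count how many 30-second windows have passed since the Unix epoch.
    counter = unix_time // step
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte pick a 4-byte slice.
    offset = digest[-1] & 0x0F
    number = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(number % 10 ** digits).zfill(digits)


def login_allowed(password_ok: bool, submitted_code: str, secret: bytes, unix_time: int) -> bool:
    """Hypothetical check: the password AND the one-time code must both pass."""
    expected = one_time_code(secret, unix_time)
    # compare_digest avoids leaking information through comparison timing.
    return password_ok and hmac.compare_digest(submitted_code, expected)
```

The point of the sketch is the `and` in `login_allowed`: a criminal who fools one check, such as face ID or a stolen password, still fails without the second factor.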

3 Ways to Spot Fake Images

Before you start an online relationship or share an apparent news story on social media, scrutinize images using these three tips to pick out malicious AI-generated art and deepfakes.

1. Inspect the context around the image.

Fake images usually don’t appear by themselves. There’s often text or a larger article around them. Inspect the text for typos, poor grammar, and overall poor composition. Phishers are notorious for their poor writing skills. AI-generated text is more difficult to detect because its grammar and spelling are often correct; however, the sentences may seem choppy.

2. Evaluate the claim.

Does the image seem too bizarre to be real? Too good to be true? Extend this generation’s rule of thumb of “Don’t believe everything you read on the internet” to include “Don’t believe everything you see on the internet.” If a story claims to be real news, search for the headline elsewhere. If it’s truly noteworthy, at least one other site will report on the event.

3. Check for distortions.

AI technology often generates a finger or two too many on hands, and a deepfake creates eyes that may have a soulless or dead look to them. Also, there may be shadows in places where they wouldn’t be natural, and the skin tone may look uneven. In deepfaked videos, the voice and facial expressions may not exactly line up, making the subject look robotic and stiff.

Boost Your Online Safety With McAfee

Fake images are tough to spot, and they’ll likely get more realistic as the technology improves. Awareness of emerging AI threats better prepares you to take control of your online life. There are quizzes online that compare deepfakes and AI art with genuine people and artworks created by humans. When you have a spare ten minutes, consider taking a quiz and learning from your mistakes so you can identify malicious fake art in the future.

To give you more confidence in the security of your online life, partner with McAfee. McAfee+ Ultimate is the all-in-one privacy, identity, and device security service. Protect up to six members of your family with the family plan, and receive up to $2 million in identity theft coverage. Partner with McAfee to stop any threats that sneak under your watchful eye.