YouTube videos are like today’s candid camera: they can be fun, educational, and, sometimes, a window into someone else’s most embarrassing moments. But the videos we watch could just as easily turn the tables and open a window into our own lives. How? By carrying secret instructions that can trigger the voice-recognition tools on our smartphones. A few well-placed words in a video’s audio are enough to make our devices obey. After all, it only takes “Ok Google.”

Researchers at the University of California, Berkeley and Georgetown University demoed this attack on, well, YouTube. The demo shows how a heavily modified voice command can activate Google Now, the personal assistant on Android devices. Once activated, the assistant can carry out a range of tasks on the phone. According to the researchers who demonstrated the technique, the concern is that sounds like these could be hidden in videos posted to platforms like YouTube.

This works because smartphones process sound differently than the human ear does. By manipulating a recording of a real voice saying “Ok Google,” attackers can create a sound that a phone’s personal assistant recognizes as a command, even though it sounds like strange noise to us.

As a result, cybercriminals could give orders to a smartphone without the user’s knowledge. One of the researchers, Micah Sherr, demonstrated this by using the technique to open the Facebook app during an interview with CNBC. That’s just the tip of the iceberg: the attack could issue any command the voice assistant accepts. For example, the researchers believe this method could prompt a smartphone to visit a website containing malware, potentially without alerting the user.

Luckily, this attack isn’t likely to become widespread. So far, researchers have only demonstrated its potential; criminals haven’t been caught using it. And crooks have easier methods for attacking people on a mass scale. Still, the discovery is significant, given how common voice recognition has become.

As devices become our personal assistants, whether they run Android or iOS or sit on the kitchen counter like the Amazon Echo, we will rely on their capabilities more and more. Consumers should be aware of the security risks that come with them. After all, who wants to worry while watching video compilations of people falling?

Here are some tips for using voice recognition safely:

- Turn off always-on mode. Many devices default to an always-on mode, in which the microphone is constantly listening for keywords such as “Ok Google.” This leaves an open door for attacks, so change the setting and enable voice recognition only when you plan to use it.

- Be careful about what you record. Criminals aren’t likely to scour the internet for recordings of people’s voices, but why leave yourself exposed? If you do become a target, recordings you’ve uploaded give attackers easy access to samples of your voice. Not to mention, reducing your digital footprint is a good idea in general if you want to use any form of biometric security safely.

- Don’t click on suspicious videos. It’s safer to watch videos from reputable sources, or ones with millions of views. But let’s be honest: sometimes that mysterious video with 27 views has a really good title. Try to avoid these anyway. And if you really can’t resist, keep an eye on how your devices behave while you watch.

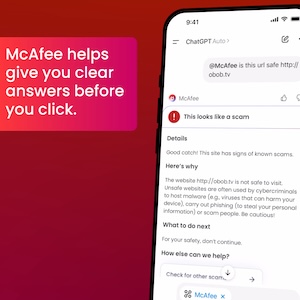

And, of course, stay on top of the latest consumer and mobile security threats by following me and @McAfee on Twitter, and ‘Like’ us on Facebook.