Authored by Lakshya Mathur and Abhishek Karnik

As the world gears up for the 2024 Paris Olympics, excitement is building, and so is the potential for scams. From fake ticket sales to counterfeit merchandise, scammers are on the prowl, leveraging big events to trick unsuspecting fans. Recently, McAfee researchers uncovered a particularly malicious scam that not only aims to deceive but also to portray the International Olympic Committee (IOC) as corrupt.

This scam relies on sophisticated social engineering techniques, which have become more accessible than ever thanks to advancements in Artificial Intelligence (AI). Tools like audio cloning enable scammers to create convincing fake audio messages at a low cost. These technologies were highlighted in McAfee’s AI Impersonator report last year, showcasing the growing threat of such tech in the hands of fraudsters.

The latest scheme involves a fictitious Amazon Prime series titled “Olympics has Fallen II: The End of Thomas Bach,” narrated by a deepfake version of Elon Musk’s voice. This fake series was reported to have been released on a Telegram channel on June 24th, 2024. It’s a stark reminder of the lengths to which scammers will go to spread misinformation and exploit public figures to create believable narratives.

As the Olympic Games approach, it’s crucial to stay vigilant and question the authenticity of sensational claims, especially those found on less regulated platforms like Telegram. Always verify information through official channels to avoid falling victim to these sophisticated scams.

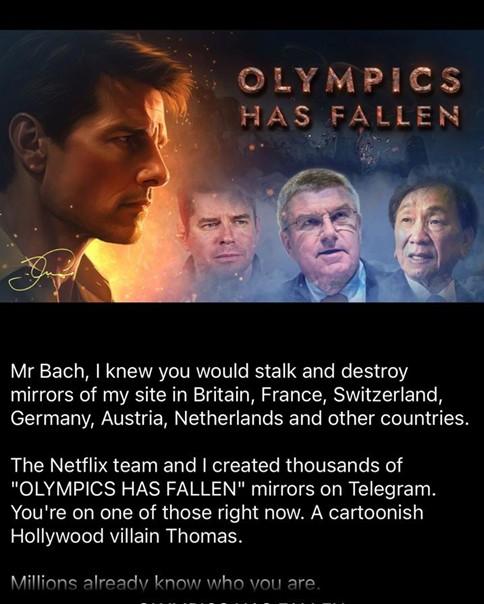

Cover Image of the series

This series seems to be the work of the same creator who, a year ago, put out a similar short series titled “Olympics has Fallen,” falsely presented as a Netflix series featuring a deepfake voice of Tom Cruise. With the Olympics beginning, this new release looks to be a sequel to last year’s fabrication.

Image and Description of last year’s released series

These so-called documentaries are currently being distributed via Telegram channels. The primary aim of this series is to target the Olympics and discredit its leadership. Within just a week of its release, the series has already attracted over 150,000 viewers, and the numbers continue to climb.

In addition to claiming to be an Amazon Prime story, the creators of this content have also circulated images of what seem to be fabricated endorsements and reviews from reputable publishers, enhancing their attempt at social engineering.

Fake endorsements attributed to famous publishers

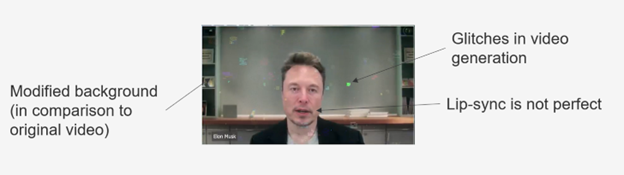

This 3-part series consists of episodes that use AI voice cloning, image diffusion and lip-sync to piece together a fake narration. A lot of effort has been expended to make the video look like a professionally created series. However, there are certain hints in the video that it is fabricated, such as the picture-in-picture overlay that appears at various points of the series. On close observation, certain glitches are visible.

Overlay video within the series with some discrepancies

The original video appears to be from a Wall Street Journal (WSJ) interview that has then been altered (notice the background). The audio clone is almost indiscernible by human inspection.

Original video snapshot from WSJ Interview

Modified and altered video snapshot from fake series

Episode thumbnails and their descriptions captured from the Telegram channel

Elon Musk’s voice has been a target for impersonation before. In fact, McAfee’s 2023 Hacker Celebrity Hot List placed him at number six, highlighting his status as one of the most frequently mimicked public figures in cryptocurrency scams.

As the prevalence of deepfakes and related scams continues to grow, along with campaigns of misinformation and disinformation, McAfee has developed deepfake audio detection technology. Showcased on Intel’s AI PCs at RSA in May, McAfee’s Deepfake Detector (formerly known as Project Mockingbird) helps people discern truth from fiction. It defends consumers against cybercriminals who use fabricated, AI-generated audio to carry out scams that rob people of money and personal information, enable cyberbullying, and manipulate the public image of prominent figures.

With the 2024 Olympics on the horizon, McAfee predicts a surge in scams involving AI tools. Whether you’re planning to travel to the summer Olympics or just following the excitement from home, it’s crucial to remain alert. Be wary of unsolicited text messages offering deals, steer clear of unfamiliar websites, and be skeptical of the information shared on various social platforms. It’s important to maintain a critical eye and use tools that enhance your online safety.

Recommendations When Viewing Online Content

McAfee is committed to empowering consumers to make informed decisions by providing tools that identify AI-generated content and by raising awareness about their application where necessary. AI-generated content is becoming increasingly believable. Some key recommendations while viewing content online:

- Be skeptical of content from untrusted sources – Always question the motive. In this case, the content is accessible on Telegram channels and posted to uncommon public cloud storage.

- Be vigilant while viewing the content – Most AI fabrications will have some flaws, although it’s becoming increasingly difficult to spot such discrepancies at a glance. In this video, we noted some obvious indicators that the footage was forged; the audio, however, is harder to judge.

- Cross-verify information – Searching for the title on popular search engines, or in the Amazon Prime catalog, would very quickly lead consumers to realize that something is amiss.

Note: McAfee is not affiliated with the Olympics and nothing in this article should be interpreted as indicating or implying such an affiliation. The purpose of this article is to help build awareness against misinformation campaigns. “Olympics Has Fallen II” is the name of one such campaign discovered by McAfee.